Agentic AI Frameworks: How to Choose the Right One

Compare the top agentic AI frameworks by orchestration style, production readiness, and team fit. Practical decision guide for LangGraph, CrewAI, and more.

By: Deepit Patil

Co-Founder and CTO

Edited by Craze Editorial Team

A new agentic AI framework seems to launch every week. LangGraph, CrewAI, AutoGen, Microsoft Agent Framework, OpenAI Agents SDK, DSPy, LlamaIndex… the list keeps growing. And every comparison article gives you the same output: a feature table, a few paragraphs per framework, and a vague “it depends on your use case.”

That’s not useful when you’re trying to make a real decision.

Gartner predicts that by the end of 2026, 40% of enterprise applications will include task-specific AI agents, up from less than 5% in 2025. That growth means more teams are facing the framework question right now. This guide helps you answer it by focusing on the thing that actually matters: how your framework orchestrates work.

TL;DR

- Agentic AI frameworks provide the building blocks for autonomous AI agents: orchestration, memory, tool calling, and human-in-the-loop controls.

- Your choice depends on orchestration style: graph-based (LangGraph) for production control, role-based (CrewAI) for fast prototyping, or conversational (Microsoft Agent Framework) for reasoning and consensus tasks.

- Production readiness goes beyond features. Look for observability, state persistence, error recovery, and deployment support before committing.

- The landscape is consolidating. Microsoft merged AutoGen and Semantic Kernel into a single Agent Framework (GA April 2026), and MCP is becoming the standard for tool integration.

- Start with the simplest approach that works, then add framework complexity when you hit real limits.

What Are Agentic AI Frameworks?

An agentic AI framework is a software toolkit that gives you the building blocks for creating AI agents that can perceive, reason, plan, and act on multi-step tasks. Instead of writing all the orchestration, memory management, and tool integration logic from scratch, you use a framework to handle the plumbing so you can focus on what your agent actually does.

The core building blocks in most frameworks include:

- Orchestration: How the agent decides what to do next, in what order, and when to stop

- Memory: Short-term (within a conversation) and long-term (across sessions) context management

- Tool calling: Connecting agents to external APIs, databases, search engines, and other services

- Human-in-the-loop: Checkpoints where a person can review, approve, or override the agent’s actions

- Planning: Breaking complex goals into smaller steps the agent can execute sequentially or in parallel
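To make these building blocks concrete, here is a minimal, framework-agnostic sketch in plain Python. It is not taken from any framework's API: `TOOLS`, `run_agent`, and `always_approve` are hypothetical names, and the tools are stubs standing in for real API calls.

```python
from typing import Callable

# Hypothetical tool registry: plain functions keyed by name (stubs, not real APIs).
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda query: f"results for {query!r}",
    "summarize": lambda text: text[:40],
}


def always_approve(tool_name: str, arg: str) -> bool:
    """Default human-in-the-loop hook: approve every step."""
    return True


def run_agent(plan, approve=always_approve):
    """Execute a plan of (tool_name, argument) steps and return the memory log."""
    memory = []                                   # memory: context kept across steps
    for tool_name, arg in plan:                   # orchestration: what runs next, when to stop
        if not approve(tool_name, arg):           # human-in-the-loop: reviewer can veto a step
            memory.append(f"{tool_name}: skipped by reviewer")
            continue
        result = TOOLS[tool_name](arg)            # tool calling
        memory.append(f"{tool_name}: {result}")
    return memory


# Planning is reduced here to a fixed list of steps.
log = run_agent([("search", "agent frameworks"), ("summarize", "a long text")])
```

A real framework replaces each of these pieces with something sturdier (an LLM-driven planner, persistent memory, typed tool schemas), but the division of responsibilities is the same.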

Frameworks are different from no-code agent builders, which let you create agents through visual interfaces without writing code. Frameworks give you more control and flexibility, but they require programming knowledge and more setup time.

They’re also different from calling an LLM API directly. Direct API calls work fine for simple, single-turn tasks. But once you need multi-step reasoning, tool use, memory across sessions, or coordination between multiple agents, a framework saves you from reinventing infrastructure that others have already built and tested.

Understanding the internal design patterns behind these frameworks helps too. If you’re curious about how agents are structured under the hood, that context will make the orchestration styles below easier to evaluate.

Three Orchestration Styles That Define Your Choice

Here’s where most framework comparisons go wrong: they compare frameworks feature by feature, as if all frameworks solve the same problem the same way. They don’t.

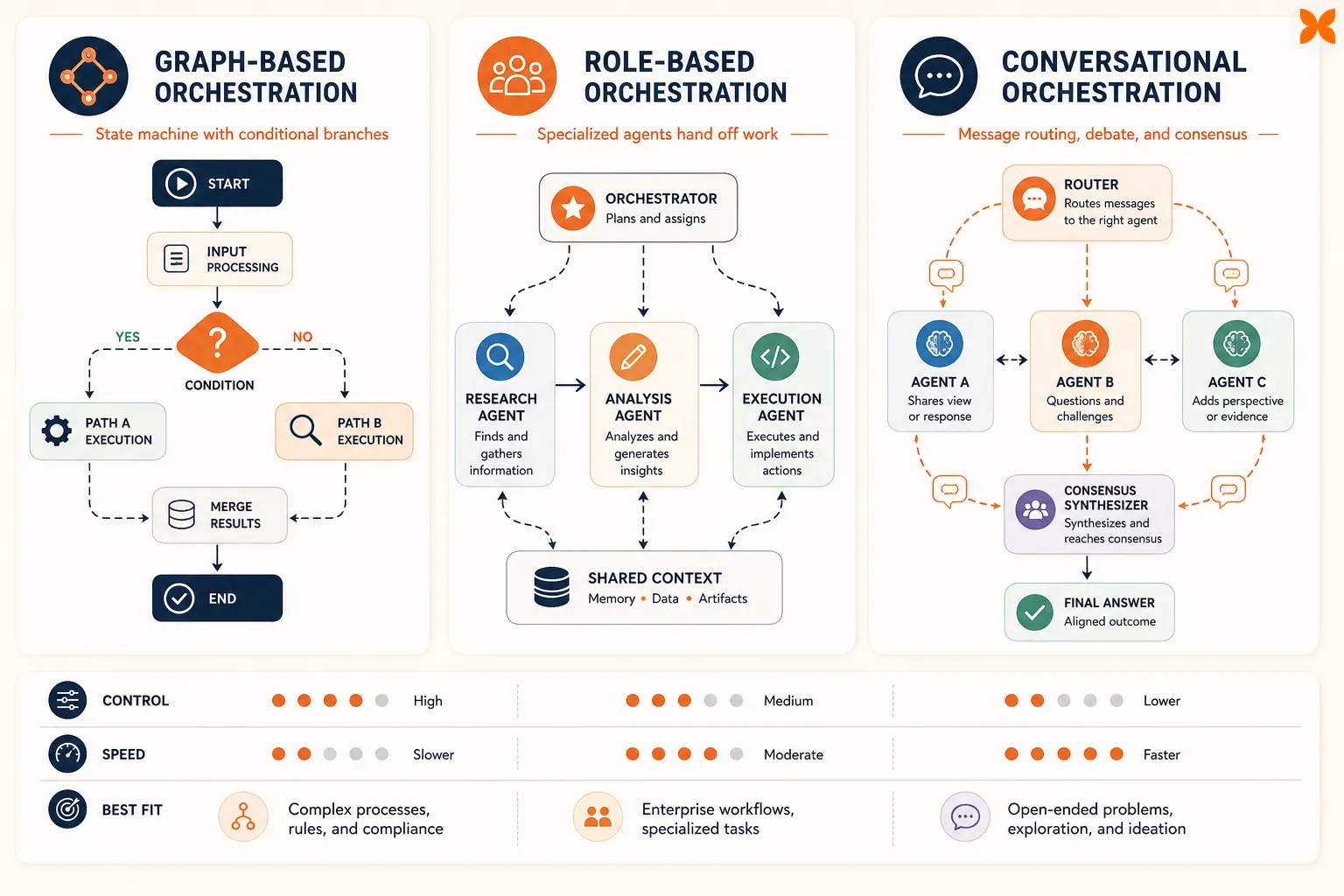

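Most agentic AI frameworks are built around one of three orchestration styles; a few, covered in the comparison table later in this guide, specialize in something else such as retrieval or prompt optimization. Picking the right style for your use case matters more than picking the most popular framework. Once you know which pattern fits your problem, the framework choice often becomes obvious.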

Graph-Based Orchestration

Graph-based frameworks model your agent’s workflow as a state machine: nodes represent actions or decisions, edges define the flow between them, and the framework tracks state at every step.

You define a graph where each node is a function (call a tool, ask the LLM, check a condition) and edges determine what happens next based on the output. The framework manages state transitions, checkpointing, and replay.
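The node-and-edge model described above can be sketched in a few lines of plain Python. This is an illustration of the pattern only, not LangGraph's API: `run_graph`, `END`, and the draft/review workflow are hypothetical names chosen for the example.

```python
END = "END"  # hypothetical sentinel marking the end of the workflow


def run_graph(nodes, edges, start, state, max_steps=20):
    """Run nodes until a router returns END, checkpointing after every step."""
    checkpoints = []
    current = start
    for _ in range(max_steps):
        state = nodes[current](dict(state))        # node: function from state to new state
        checkpoints.append((current, dict(state)))  # snapshot usable for replay or audit
        current = edges[current](state)            # edge: choose the next node from the state
        if current == END:
            return state, checkpoints
    raise RuntimeError("graph did not terminate within max_steps")


def draft(state):
    state["revision"] = state.get("revision", 0) + 1
    state["draft"] = f"draft v{state['revision']}"
    return state


def review(state):
    state["approved"] = state["revision"] >= 2     # stand-in for an LLM or human check
    return state


nodes = {"draft": draft, "review": review}
edges = {
    "draft": lambda s: "review",
    "review": lambda s: END if s["approved"] else "draft",  # conditional branch
}

final_state, checkpoints = run_graph(nodes, edges, "draft", {})
```

The `max_steps` guard is a design choice worth copying: a conditional edge that loops back is exactly where an agent can spin forever.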

This pattern works best for:

- Complex multi-step workflows with conditional branching

- Regulated industries where you need audit trails and deterministic execution

- Production systems that require observability and debugging

- Workflows where a human needs to review intermediate results before the agent continues

LangGraph is the dominant graph-based framework, with roughly 400 companies running it in production, including Klarna, Uber, LinkedIn, and BlackRock. It reached v1.0 GA in October 2025 and offers built-in state persistence, human-in-the-loop checkpoints, and integration with LangSmith for step-by-step tracing.

The trade-off: graph-based orchestration gives you the most control, but it requires the most upfront design work. If your workflow is simple (a single agent with a few tools), this pattern adds unnecessary complexity.

Role-Based Orchestration

Role-based frameworks structure agents as a team. Each agent gets a role (researcher, writer, reviewer), a goal, and access to specific tools. The framework handles delegation and coordination.

You define agents with roles and backstories, assign them tasks, and let the framework manage the handoffs. Think of it like assembling a small team where each member has a specialty.
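As a rough sketch of that team model, the snippet below chains three role agents in plain Python. It does not use CrewAI's API: `RoleAgent` and `run_crew` are hypothetical names, and each `work` function is a stub where a real system would call an LLM with the role's prompt.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RoleAgent:
    role: str
    goal: str
    work: Callable[[str], str]   # stub for an LLM call made with this role's prompt


def run_crew(agents, task):
    """Sequential delegation: each agent receives the previous agent's output."""
    output = task
    handoffs = []
    for agent in agents:
        output = agent.work(output)
        handoffs.append((agent.role, output))   # record each handoff for later inspection
    return output, handoffs


crew = [
    RoleAgent("researcher", "collect facts", lambda t: f"facts about {t}"),
    RoleAgent("writer", "draft an article", lambda t: f"article based on {t}"),
    RoleAgent("reviewer", "check the draft", lambda t: f"reviewed {t}"),
]

result, handoffs = run_crew(crew, "agent frameworks")
```

Sequential handoff is only one delegation strategy; role-based frameworks may also let a manager agent decide who works next.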

This pattern works best for:

- Rapid prototyping of multi-agent workflows

- Collaborative tasks where agents need different specialties (research + writing + editing)

- Scenarios where you want to get something working quickly and refine later

- Teams that prefer a mental model closer to human team structures

CrewAI is the leading role-based framework. It has the lowest barrier to entry among multi-agent frameworks. In 2025, CrewAI added Flows, an event-driven pipeline mode that bridges the gap between prototyping and production workloads.

The trade-off: role-based frameworks sacrifice fine-grained execution control for speed of development. You get less visibility into exactly why an agent made a specific decision, and debugging complex multi-agent interactions can be harder than with graph-based approaches.

Conversational Orchestration

Conversational frameworks use message passing as the primary coordination mechanism. Agents communicate by sending messages to each other, debating, and reaching consensus.

Agents are participants in a conversation. One agent proposes a solution, another critiques it, a third synthesizes the feedback. The framework manages the message flow, turn-taking, and termination conditions.
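The message flow, turn-taking, and termination condition mentioned above can be illustrated with a small plain-Python loop. This is a sketch under simplifying assumptions, not Microsoft Agent Framework's API: `run_conversation`, `proposer`, and `critic` are hypothetical, only two agents take part, and their replies are scripted instead of generated by a model.

```python
def run_conversation(speakers, opening, is_done, max_turns=10):
    """Round-robin turn-taking until is_done(history) or max_turns is reached."""
    history = [{"sender": "user", "content": opening}]
    names = list(speakers)
    for turn in range(max_turns):
        name = names[turn % len(names)]            # turn-taking
        reply = speakers[name](history)            # each agent sees the full message history
        history.append({"sender": name, "content": reply})
        if is_done(history):                       # termination condition
            break
    return history


def proposer(history):
    critiques = sum(1 for m in history if m["sender"] == "critic")
    return f"proposal v{critiques + 1}"


def critic(history):
    latest = history[-1]["content"]
    return "APPROVE" if latest.endswith("v2") else f"revise {latest}"


history = run_conversation(
    {"proposer": proposer, "critic": critic},
    opening="design a retry policy",
    is_done=lambda h: h[-1]["content"] == "APPROVE",
)
```

Every extra turn is another model call in a real system, which is where the latency and token cost of this style comes from.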

This pattern works best for:

- Tasks that benefit from debate and iterative reasoning

- Consensus-building across multiple perspectives

- Code review, research synthesis, and analysis where multiple viewpoints improve quality

- Azure and .NET environments where Microsoft ecosystem integration matters

Microsoft Agent Framework (the merger of AutoGen and Semantic Kernel) is the main conversational framework. It reached 1.0 GA in April 2026, unifying two previously separate projects into a single SDK that supports both Python and .NET.

The trade-off: conversational orchestration excels at reasoning-heavy tasks but can be inefficient for structured, deterministic workflows. Message-passing coordination adds latency and token costs compared to graph-based execution.

With these three patterns in mind, you can narrow your framework search significantly before looking at individual features. The next step is comparing the leading frameworks side by side on the criteria that matter most.

Framework Comparison Table

This table covers the six frameworks worth evaluating in 2026. There are other options, but these represent the strongest choice in each orchestration category.

| Framework | Orchestration Style | Best For | Languages | Production Readiness | MCP Support | Key Strength | Key Limitation |
|---|---|---|---|---|---|---|---|
| LangGraph | Graph-based | Complex production workflows, regulated industries | Python, JS/TS | High (v1.0 GA, LangSmith tracing) | Native | State persistence, checkpointing, auditability | Steeper learning curve |
| CrewAI | Role-based | Rapid prototyping, team-based workflows | Python | Medium (Flows added for production) | Via adapter | Fastest time to working prototype | Less execution control than graph-based |
| Microsoft Agent Framework | Conversational | Azure/.NET teams, debate/consensus tasks | Python, .NET | High (1.0 GA April 2026) | Native | Unified Microsoft ecosystem, enterprise support | Newer GA, smaller community than LangGraph |
| OpenAI Agents SDK | Single-agent pipeline | OpenAI-model workflows, simple agent tasks | Python | Medium | Via adapter | Simplest setup with OpenAI models | Locked to OpenAI ecosystem |
| LlamaIndex | Data-centric | RAG-heavy agents, knowledge retrieval | Python, TS | Medium | Via adapter | Best data ingestion and retrieval pipeline | Weaker multi-agent orchestration |
| DSPy | Optimization-based | Prompt optimization, systematic agent tuning | Python | Low-Medium | Limited | Programmatic prompt optimization | Steep learning curve, research-oriented |

A quick note on what’s not in the table: frameworks like MetaGPT, OpenAgents, and Haystack serve more specialized niches. They’re worth exploring if your use case aligns closely with their specialty, but for most teams evaluating frameworks, the six above cover the primary orchestration patterns.

Of course, knowing which frameworks exist is only half the decision. The other half is understanding whether one is actually ready for what you need to build.

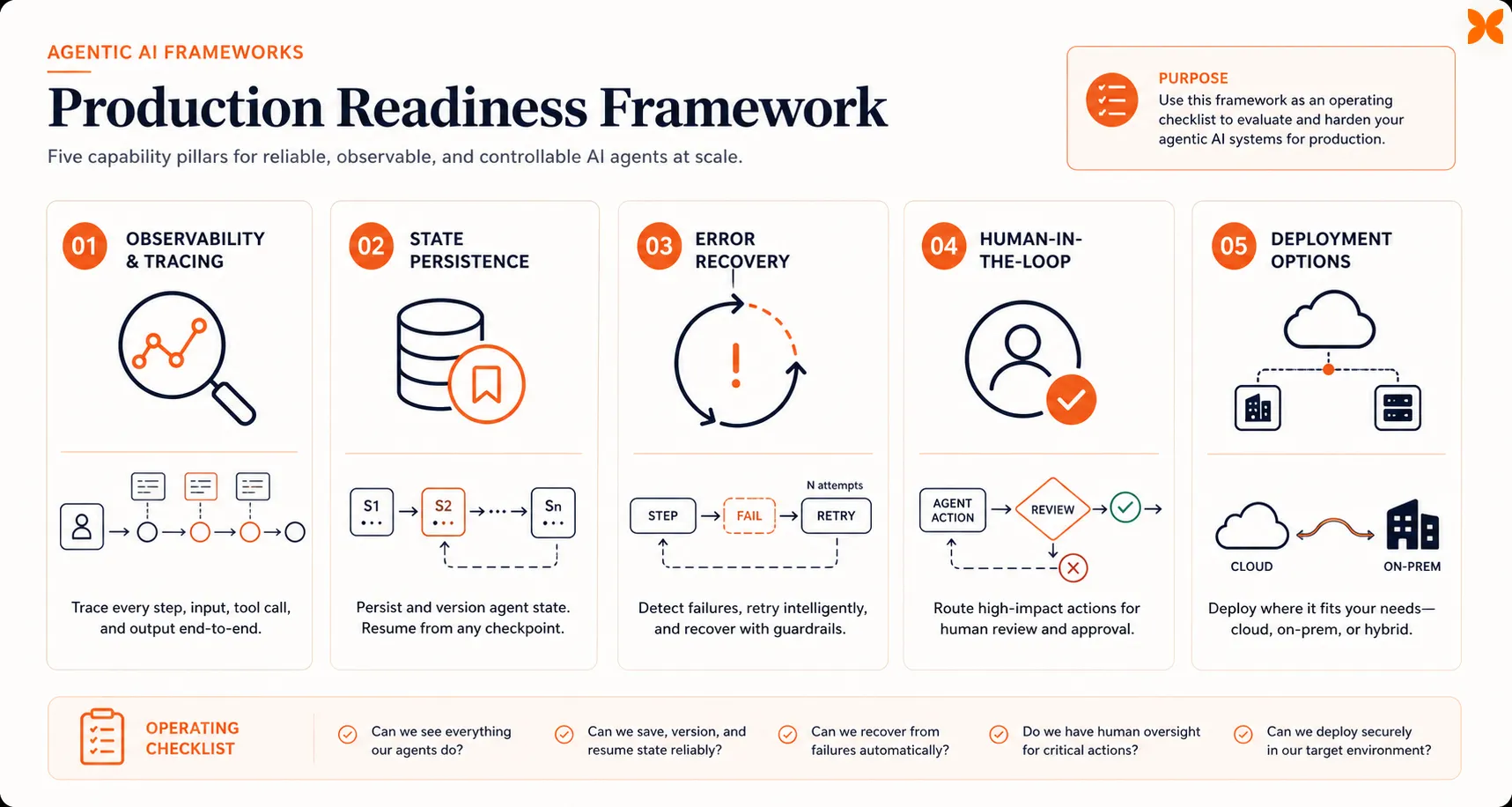

What Production Readiness Actually Looks Like

Framework feature lists can be misleading. A framework might claim “multi-agent support” and “tool calling” while offering no observability, no error recovery, and no way to checkpoint long-running workflows. Gartner predicts that over 40% of agentic AI projects will be canceled by 2027 due to escalating costs and inadequate risk controls, so the details of production readiness are critical.

Here are five things to evaluate before committing to a framework for production use.

Observability and Tracing

When an agent fails or produces a wrong answer, can you see exactly which step went wrong, what inputs it received, and what decision it made? LangGraph integrates with LangSmith for step-by-step traces with token counts per node. Microsoft Agent Framework provides telemetry through Azure Monitor. If your framework doesn’t have a clear answer for “how do I debug a failed agent run,” it’s not production-ready.
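To show the kind of per-step record a tracing tool collects, here is a small plain-Python sketch. It is not LangSmith or Azure Monitor: the `traced` decorator, the in-memory `TRACE` list, and the two example steps are hypothetical stand-ins.

```python
import functools
import time

TRACE: list[dict] = []   # hypothetical in-memory trace store


def traced(step_name):
    """Decorator that records inputs, output or error, and duration for a step."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            record = {"step": step_name, "args": args, "kwargs": kwargs}
            started = time.perf_counter()
            try:
                record["output"] = fn(*args, **kwargs)
                record["status"] = "ok"
                return record["output"]
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise                                  # tracing must not swallow failures
            finally:
                record["seconds"] = time.perf_counter() - started
                TRACE.append(record)
        return wrapper
    return decorator


@traced("lookup_order")
def lookup_order(order_id):
    return {"order_id": order_id, "status": "shipped"}


@traced("refund")
def refund(order):
    raise ValueError("refund API unavailable")


order = lookup_order("A-17")
try:
    refund(order)
except ValueError:
    pass

failed_steps = [r["step"] for r in TRACE if r["status"] == "error"]
```

With records like these, "which step went wrong and what did it receive" becomes a query over `TRACE` instead of a guess.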

State Persistence

Production agents often run workflows that span hours or days. Does the framework persist state between steps so a failure at step 7 doesn’t mean restarting from step 1? Graph-based frameworks handle this best by design, since every node transition is a natural checkpointing opportunity.
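The idea of resuming from the failed step can be sketched with a JSON checkpoint file. This is a simplified illustration, assuming steps are plain functions and state is JSON-serializable; `run_with_checkpoints` and the two steps are hypothetical, and real frameworks typically persist to a database.

```python
import json
import tempfile
from pathlib import Path


def run_with_checkpoints(steps, checkpoint_path, state=None):
    """Run steps in order, saving progress after each one so a rerun can resume."""
    path = Path(checkpoint_path)
    if path.exists():
        saved = json.loads(path.read_text())
        next_index, state = saved["next_index"], saved["state"]
    else:
        next_index, state = 0, dict(state or {})
    for index in range(next_index, len(steps)):
        state = steps[index](state)
        path.write_text(json.dumps({"next_index": index + 1, "state": state}))
    return state


calls = []
fail_once = {"armed": True}


def step_a(state):
    calls.append("a")
    return {**state, "a": 1}


def step_b(state):
    calls.append("b")
    if fail_once["armed"]:                 # simulate a transient failure on the first try
        fail_once["armed"] = False
        raise TimeoutError("tool call timed out")
    return {**state, "b": 2}


with tempfile.TemporaryDirectory() as tmp:
    checkpoint = Path(tmp) / "run.json"
    try:
        run_with_checkpoints([step_a, step_b], checkpoint)
    except TimeoutError:
        pass
    final = run_with_checkpoints([step_a, step_b], checkpoint)   # resumes at step_b
```

Note that `step_a` runs only once across both attempts, which is the whole point of checkpointing.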

Error Recovery

What happens when an external tool call times out, an API returns an unexpected response, or the LLM hallucinates in the middle of a workflow? Look for frameworks that support retry logic, fallback paths, and graceful degradation rather than crashing the entire workflow.
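Retry logic with a fallback path might look like the following plain-Python sketch. The helper name `call_with_recovery`, the choice of which exceptions count as transient, and the stub tools are all assumptions made for illustration.

```python
import time


def call_with_recovery(primary, fallback, attempts=3, base_delay=0.0):
    """Retry primary with exponential backoff, then degrade to fallback."""
    errors = []
    for attempt in range(attempts):
        try:
            return primary(), errors
        except (TimeoutError, ConnectionError) as exc:   # treated as transient here
            errors.append(repr(exc))
            time.sleep(base_delay * (2 ** attempt))      # backoff; 0.0 keeps the demo fast
    return fallback(), errors                             # graceful degradation


attempts_seen = {"count": 0}


def flaky_search():
    attempts_seen["count"] += 1
    if attempts_seen["count"] < 3:
        raise TimeoutError("search timed out")
    return "live results"


def cached_search():
    return "cached results"


def always_down():
    raise ConnectionError("service down")


result, errors = call_with_recovery(flaky_search, cached_search)
degraded, degraded_errors = call_with_recovery(always_down, cached_search)
```

Returning the collected errors alongside the result keeps the failure history available for tracing even when the call eventually succeeds.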

Human-in-the-Loop Support

Autonomous doesn’t mean unsupervised. The most production-ready frameworks let you insert approval checkpoints at specific workflow steps, route sensitive decisions to a human reviewer, and resume agent execution after human input. If you’re building agents for business workflows, human oversight isn’t optional.
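An approval checkpoint with pause and resume can be sketched as a small state machine. This is an illustrative pattern, not a specific framework's interrupt API: `Run`, `advance`, and the email steps are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    steps: list                        # (name, function, needs_approval) tuples
    state: dict = field(default_factory=dict)
    position: int = 0
    status: str = "running"            # running | waiting_for_human | rejected | done


def advance(run, approved=None):
    """Run steps until one needs approval; call again with the reviewer's decision."""
    while run.position < len(run.steps):
        name, fn, needs_approval = run.steps[run.position]
        if needs_approval:
            if approved is None:
                run.status = "waiting_for_human"   # pause here until a person decides
                return run
            if approved is False:
                run.status = "rejected"
                return run
            approved = None                         # a decision covers one checkpoint only
        run.state = fn(run.state)
        run.position += 1
    run.status = "done"
    return run


run = Run(steps=[
    ("draft_email", lambda s: {**s, "draft": "Refund approved"}, False),
    ("send_email", lambda s: {**s, "sent": True}, True),   # sensitive step
])

advance(run)                       # runs the draft, then pauses before sending
paused_status = run.status
advance(run, approved=True)        # reviewer approves; execution resumes
```

Because `Run` holds its own position and state, it could be persisted while waiting, so a reviewer can respond hours later.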

Deployment Options

Can you run the framework in your existing infrastructure? Some frameworks require specific cloud platforms; others can be self-hosted or deployed as serverless functions. Consider whether you need managed hosting, private cloud support, or the ability to run agents on-premises for compliance reasons.

These criteria matter more than any feature comparison table. A framework that excels on all five dimensions but has a steep learning curve is usually a better long-term investment than one that’s easy to set up but becomes painful to operate at scale.

Once you’ve assessed both orchestration style and production readiness, the final step is putting it all together into a concrete decision.

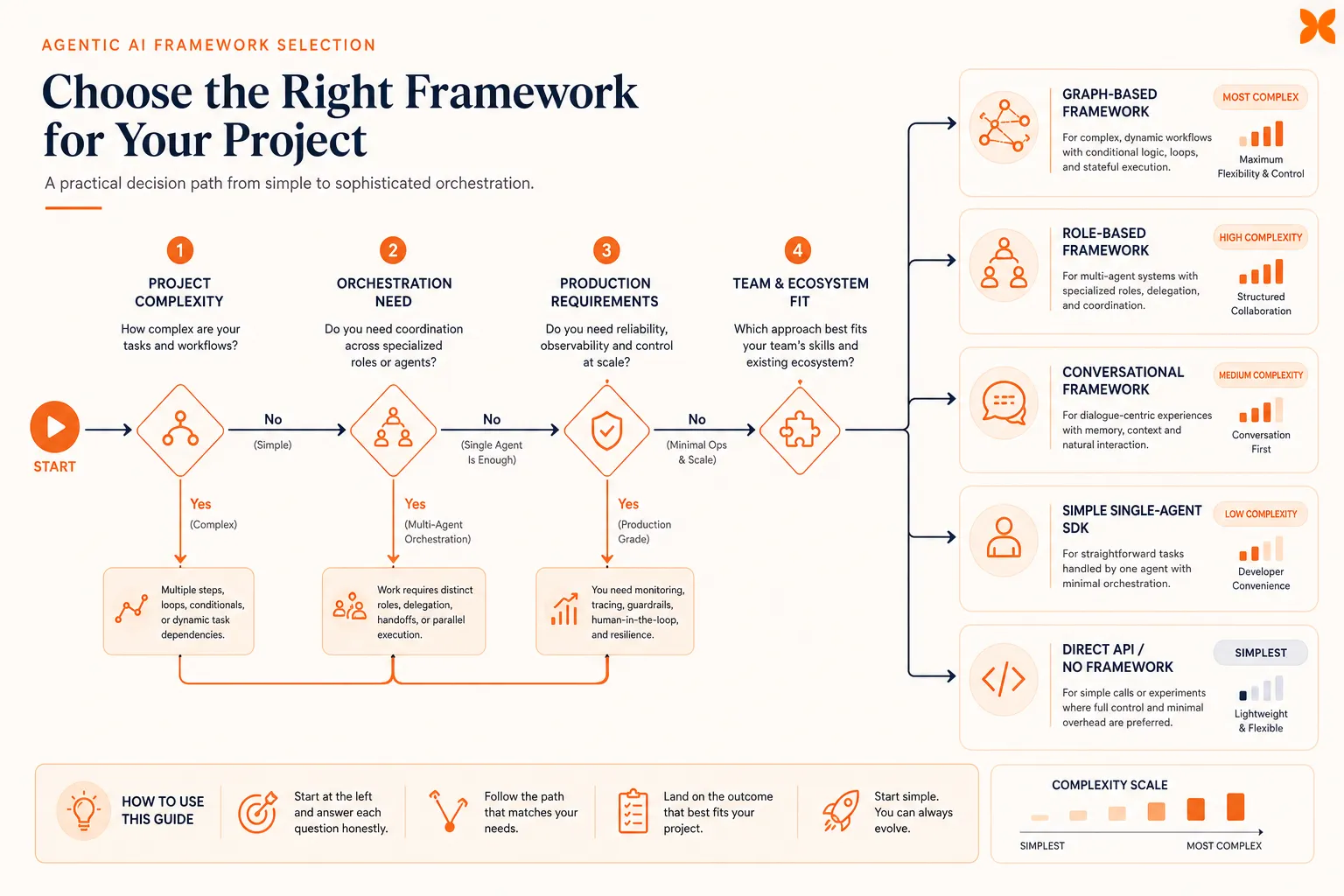

How to Pick the Right Framework for Your Project

Here’s a practical decision path you can follow:

- Define what your agent needs to do. Is it a single agent with a few tools? A multi-step workflow with branching logic? Multiple agents collaborating? The complexity of your use case determines how much orchestration you need.

- Match your use case to an orchestration style.

  - If you need deterministic, auditable workflows with complex branching: graph-based (LangGraph)

  - If you want fast prototyping with team-based agent roles: role-based (CrewAI)

  - If your task benefits from debate, consensus, or iterative reasoning: conversational (Microsoft Agent Framework)

  - If you’re building a simple agent with OpenAI models and don’t need multi-agent coordination: OpenAI Agents SDK

  - If your agent’s primary job is retrieving and synthesizing information from documents: LlamaIndex

- Check your production requirements. If this will run in production, evaluate against the five production readiness criteria above. If it’s a prototype or internal tool, you can be more flexible.

- Consider your team’s ecosystem. Your team’s primary language (Python, .NET, TypeScript) and cloud platform (Azure, AWS, GCP) will narrow the options further. Microsoft Agent Framework is the natural choice for .NET shops. LangGraph and CrewAI are Python-first.

- Start simple and migrate up. Don’t start with the most complex framework because you think you’ll need it someday. Start with the simplest option that solves your current problem: direct API calls, simple chains, or a lightweight framework like CrewAI for prototyping. When you hit real limits (not hypothetical ones), you’ll have enough context to make a better framework decision.

One more thing: you don’t always need a framework at all. For simple single-agent tasks with straightforward tool calling, direct API integration is often enough. Frameworks add value when you need orchestration, memory, or multi-agent coordination. If you don’t need those yet, skip the framework overhead.
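The matching step of the decision path above can be written down as a small lookup. This is only a restatement of the guide's own rules in Python, with hypothetical names (`Requirements`, `recommend`); it does not weigh production requirements or team ecosystem, which still need human judgment.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    auditable_branching: bool = False     # deterministic, auditable, complex branching
    role_based_prototype: bool = False    # fast prototyping with team-style roles
    debate_or_consensus: bool = False     # debate, consensus, iterative reasoning
    openai_single_agent: bool = False     # simple agent on OpenAI models, no multi-agent
    document_retrieval: bool = False      # retrieving and synthesizing from documents


def recommend(req):
    """Return every option whose rule matches, or the no-framework default."""
    rules = [
        (req.auditable_branching, "graph-based (LangGraph)"),
        (req.role_based_prototype, "role-based (CrewAI)"),
        (req.debate_or_consensus, "conversational (Microsoft Agent Framework)"),
        (req.openai_single_agent, "OpenAI Agents SDK"),
        (req.document_retrieval, "LlamaIndex"),
    ]
    matches = [name for wanted, name in rules if wanted]
    return matches or ["direct API calls (no framework yet)"]


simple_case = recommend(Requirements())
mixed_case = recommend(Requirements(auditable_branching=True, document_retrieval=True))
```

When more than one rule matches, the result is a shortlist to evaluate against the production criteria, not a final answer.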

And if you’d rather use AI agents without building from scratch, Craze is an AI platform where you can build agents, run workflows, and schedule automations using any model, no framework or orchestration code required.

Conclusion

The right agentic AI framework isn’t the one with the most GitHub stars or the longest feature list. It’s the one that matches your orchestration needs, meets your production requirements, and fits your team’s skills and ecosystem.

Start by identifying your orchestration pattern. Then evaluate production readiness. Then check ecosystem fit. This order saves you from the most common mistake in framework selection: picking a popular framework that solves a different problem than the one you actually have.

The framework landscape is consolidating. Microsoft’s Agent Framework merger, growing MCP support across the major frameworks, and the increasing maturity of LangGraph and CrewAI mean there are fewer bad choices today than there were a year ago. But there are still wrong choices for specific situations, and the cost of switching frameworks mid-project is significant.

Pick the simplest thing that works. Build something real with it. Upgrade when your real constraints demand it, not when a new framework launches.

FAQs

What is the best framework for agentic AI?

There's no single best framework. LangGraph leads for production systems that need deterministic control, auditability, and observability, with roughly 400 companies running it in production. CrewAI is the fastest path to a working multi-agent prototype. Microsoft Agent Framework (GA April 2026) is the strongest choice for teams in the Azure/.NET ecosystem. The best framework depends on your orchestration style, production requirements, and team skills.

What are the four pillars of agentic AI?

The four core components that make AI agents agentic are: reasoning and planning (breaking goals into executable steps), memory management (maintaining context within and across sessions), tool integration (connecting to external APIs, databases, and services), and human-in-the-loop oversight (checkpoints where humans can review, approve, or override agent decisions). Every serious agentic AI framework provides building blocks for all four.

What are the different models of agentic AI?

The main architectural models for agentic AI are defined by orchestration style: graph-based (explicit state machines with nodes and edges, like LangGraph), role-based (agents assigned team roles and tasks, like CrewAI), and conversational (agents coordinating through message passing, like Microsoft Agent Framework). There's also a distinction between single-agent systems (one agent with tools) and multi-agent systems (multiple agents collaborating on a task).

Do I need a framework to build AI agents?

Not always. For simple, single-agent tasks with straightforward tool calling, direct API calls to an LLM provider (OpenAI, Anthropic, Google) are often enough. Frameworks become valuable when you need multi-step orchestration, persistent memory across sessions, coordination between multiple agents, or human-in-the-loop checkpoints. Platforms like Craze also let you build and run agents without choosing a framework or writing orchestration code.

Is AutoGen still maintained in 2026?

AutoGen is in maintenance mode. Microsoft shifted its primary development effort to the unified Microsoft Agent Framework, which merges AutoGen's multi-agent patterns with Semantic Kernel's enterprise features into a single SDK. Agent Framework 1.0 reached GA in April 2026. Existing AutoGen projects have migration paths to the new framework, but new projects should start with Microsoft Agent Framework directly.
